The ALS is back in user operation after more than two months of shutdown. Major achievements include the mechanical installation for the new booster bend power supplies and the two power supplies for the accumulator ring radio frequency system. Read more »

Features

LAAAMP-Funded Team Makes a Journey of Miles and Nanometers

Celline Awino Omondi and Miller Shatsala traveled over 9,400 miles to study samples at the nanometer scale. Through a LAAAMP grant, the Kenyan scientists spent two months at Berkeley Lab preparing and characterizing perovskite samples—work that they hope will bring solar energy to Africa. Read more »

HyMARC Aims to Hit Targets for Hydrogen Storage Using X-Ray Science

Understanding how materials absorb and release hydrogen is the focus of the Hydrogen Materials Advanced Research Consortium (HyMARC). At the ALS, the HyMARC Approved Program was recently renewed, underscoring the key role that soft x-ray techniques have played in addressing the challenges of hydrogen storage. Read more »

2023 ALS User Meeting Returned On-Site to Focus on the Future

For the first time in four years, users, staff, and other members of the scientific community gathered in person for the ALS User Meeting. With a new ALS director in place, the 30th anniversary of first light a month away, and ALS-U construction well underway, the occasion was primed to discuss the long-term future of the facility. Read more »

Slavo Nemsak Receives 2023 Klaus Halbach Instrumentation Award

The ALS Users’ Executive Committee awarded the 2023 Klaus Halbach Award for Innovative Instrumentation to Slavo Nemsak for “the development of the state-of-the-art combined scattering and spectroscopy setup at ALS Beamline 11.0.2.” Read more »

Lori Tamura Receives 2023 Tim Renner User Services Award

After 25 years of science writing at the ALS, Lori Tamura has a tried-and-true method for clearly communicating complex scientific concepts. With her care and skill, she has increased the impact and reach of results from ALS work, and the UEC has recognized that impact with the 2023 Tim Renner User Services Award. Read more »

Will Chueh to Receive the 2023 Shirley Award

Will Chueh of Stanford University is the 2023 winner of the Shirley Award for Outstanding Scientific Achievement at the ALS. His selection recognizes his deep contributions in operando soft x-ray spectromicroscopy for imaging electrochemical redox phenomena: images and movies of battery and electrocatalytic reactions. Read more »

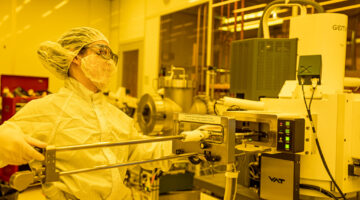

How Scientists Are Accelerating Next-Gen Microelectronics

A new center, called CHiPPS—or the Center for High Precision Patterning Science—led by Berkeley Lab microelectronics expert Ricardo Ruiz, could accelerate the next revolution in microchips, the tiny silicon components used in everything from smartphones to smart speakers, life-saving medical devices, and electric cars. Read more »

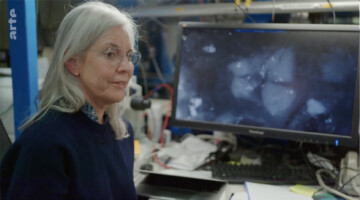

ALS Work on Roman Concrete Highlighted in German-French Documentary

A study on the remarkable durability of 2,000-year-old Roman concrete, by ALS user Marie Jackson with ALS beamline scientist Nobumichi Tamura, was recently highlighted in “Miracle Materials,” a science documentary produced by the German-French company Gruppe 5 for airing on the European public service channel ARTE. Read more »

Catching up with ASPIRES Interns from 2022 and 2023

The ASPIRES program is a 10-week paid internship matching undergraduate students from California State University, East Bay with mentors in Berkeley Lab’s Energy Sciences Area. We caught up with some of the ASPIRES interns from 2022 and 2023 to hear about their internship experiences and what they’re working on now. Read more »
